Bias is not new; we have been dealing with it for decades. What is new is that the AI systems we are building are making decisions at remarkable speed and at scale.

Bias is no longer limited to a particular demographic or geographic area; it is now widespread and affects people globally. This makes it critical for us to focus on addressing bias and scaling up what works.

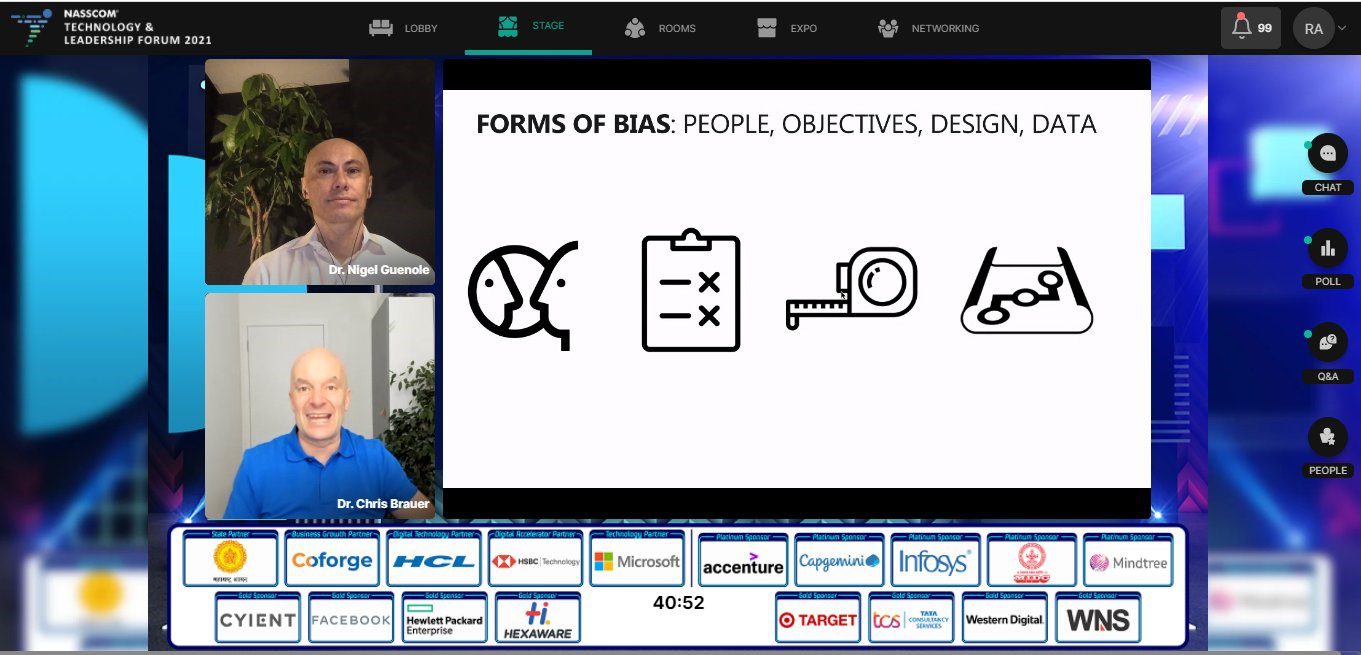

Day 3 of the NASSCOM Technology & Leadership Forum (NTLF) 2021 featured a very insightful session, "Bias in technology: What it is, Types & Examples, How & Tools to fix it", by Nigel Guenole, Director of Research, Goldsmiths, University of London, and Dr Chris Brauer, Director of Innovation, University of London.

Here are my key learnings from the session:

Before we dive into addressing bias in technology, it is imperative to understand the different forms of bias.

Forms of Bias

- People Bias – People bring their own biases, both social and racial. These biases are usually subjective and depend on the person who is designing the solution. It is critical to train people to help keep these biases out of the design of technologies

- Bias in Objectives – The COVID-19 pandemic has significantly transformed the economy and has also made the global agenda strongly led by ethics, values and sustainability. The idea is to avoid creating technologies and systems that will be left out of the future because they cannot demonstrate their ability to serve mankind

- Bias by Design – When we design systems, the idea is to be as inclusive as possible, and it is often critical to go to the people that are actually using a particular technology to see how best the system can address their needs

- Bias in Data – Just as people bias creeps in, when we write code, pull in data from sources and build on existing knowledge, we have to keep in mind that we are building the biases of those sources into our technologies

Definitions of Bias

Different communities define bias differently. Social scientists and machine learning professionals, in particular, look at bias in different ways.

Technologists and the machine learning community test the algorithm to see whether people of different genders or ethnic backgrounds receive similar scores. If they do not, and there are differences between groups (for example, in the scores a hiring algorithm gives to different types of people), they remove the bias.
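To make the kind of check described above concrete, here is a minimal sketch in Python. It is not something shown in the session: the group labels, the scores and the function names `mean_score_by_group` and `largest_gap` are all made up for illustration, and a real audit would use a fairness metric chosen for the context.

```python
from collections import defaultdict
from statistics import mean


def mean_score_by_group(records):
    """Return the average score for each group label."""
    scores = defaultdict(list)
    for group, score in records:
        scores[group].append(score)
    return {group: mean(values) for group, values in scores.items()}


def largest_gap(group_means):
    """Return the difference between the highest and lowest group mean."""
    return max(group_means.values()) - min(group_means.values())


# Hypothetical (group, score) pairs, e.g. from a hiring algorithm.
records = [("A", 0.5), ("A", 0.75), ("B", 0.25), ("B", 0.5)]

group_means = mean_score_by_group(records)
gap = largest_gap(group_means)

print(group_means)
print(gap)
```

A gap close to zero would suggest the groups are scored similarly; how large a gap counts as a problem is a judgment call that depends on the application.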

Social scientists and psychologists, on the other hand, are very concerned with whether those differences are real. They do not simply remove the biases; they first check whether the differences are real and then decide whether to remove them. At times, they also leave the biases in place.

Let us see how each of the two communities addresses these biases. Although researchers from Google and Microsoft are working on research articles that help bring these two communities together, there are still differences in the way they address bias.

Addressing Bias: Psychologists vs. Technologists

The technologist approach often includes pre-processing, training-time constraints and post-processing, while the psychologist approach comprises changing the criteria, using score banding and affirmative action.

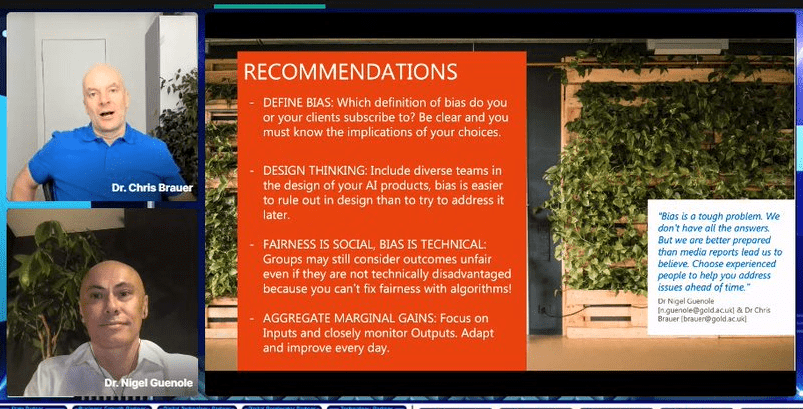
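As an illustration of score banding, one of the psychologist-side techniques mentioned above, here is a minimal Python sketch. It assumes the simplest possible variant, a fixed-width band measured down from the top score, inside which all candidates are treated as equivalent. The candidate names, the scores, the band width and the function name `top_band` are hypothetical, and the session did not prescribe this particular formulation.

```python
def top_band(scores, band_width):
    """Return the candidates whose score is within band_width of the top score.

    Everyone in the returned band is treated as equally qualified,
    so small score differences do not decide the outcome.
    """
    top = max(scores.values())
    return sorted(name for name, score in scores.items() if top - score <= band_width)


# Hypothetical assessment scores.
scores = {"cand_1": 90, "cand_2": 88, "cand_3": 86, "cand_4": 79}

band = top_band(scores, band_width=5)
print(band)
```

With a band width of 5, the three candidates scoring 90, 88 and 86 fall into the same band, while the candidate scoring 79 does not. Choosing the band width is the key design decision and would need to be justified for the assessment in question.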

Concluding Remarks

Defining bias is a precursor to actually addressing bias in technology, because it means different things to different people. The starting point for making bias-free systems is to first define bias for that context.

Find all these sessions on our YouTube channel, and follow our #NTLF2021 for more insights.

The post Addressing Bias in Technology – #NTLF2021 Session Key Takeaways appeared first on NASSCOM Community | The Official Community of Indian IT Industry.